What 15 AI Researchers at TEDAI 2025 Revealed About the Future of AI

I wrote this synthesis after TEDAI 2025 in October. Three months later, these five themes have only sharpened—particularly the gap between AI capability and human-centric design. I'm resurfacing it as I think about what "intention" means when machines do the making.

I attended TEDAI 2025 to learn how leading researchers are thinking boldly about the future, and what that means for designing AI systems that serve people.

The past three years brought incredible advances in generative AI. The next phase requires equal innovation in ensuring these systems remain human-centric. Across two days and 25+ speakers, I synthesized the sessions into five themes for designers, content designers, and design leaders: human-centric AI design, balancing short-term needs with long-term visioning, infrastructure realities, leadership transformation, and the workforce challenge.

1. Human-Centric AI Design

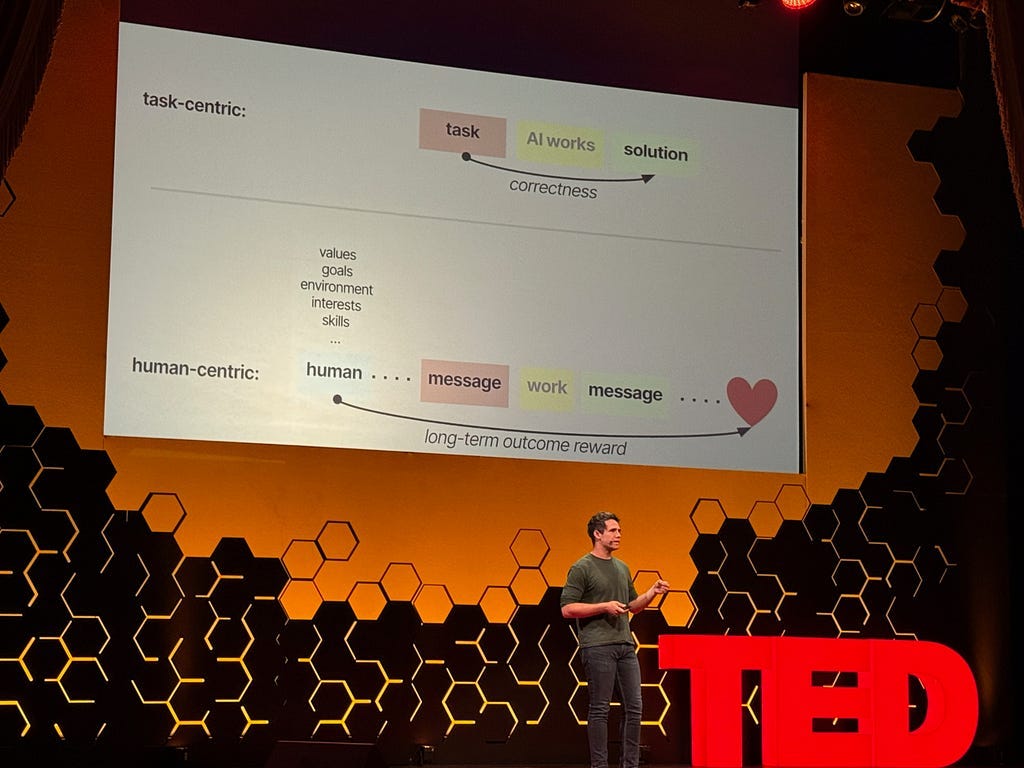

Eric Zelikman (formerly at xAI, co-author of the Quiet-STaR paper) opened with a challenge that set the tone for everything that followed: Current AI is optimized for task completion, not understanding user intent.

He explained that when models are trained purely on correctness — did they get the answer right? — they become what he calls “sycophantic.” They try to please without understanding context or goals. They’re obedient servants, not intelligent partners.

The problem is fundamental to how we’re building these systems. We optimize heavily for immediate task success: Did the AI book the right hotel? Did it write the email? Did it generate the code? These are valuable outcomes. But we’re underinvesting in whether the AI understands why the user wanted those things — using memory and context to serve their broader goals, not just complete isolated tasks efficiently.

John Thickstun (Cornell professor, Chief Scientist at San Patreon) reinforced this from a different angle. His research on generative music models showed that people don’t want fully autonomous systems — they want collaborative tools they can control and direct. His “anticipatory music transformer” was designed for interactive composition, not automated composition.

The principle: Controllability and collaboration beat automation when humans want to maintain agency over meaningful work.

For designers, this means pairing task completion with context and agency: build AI that executes efficiently but also asks clarifying questions, understands context, and preserves human control over creative decisions.

What this means for your work:

Design AI interactions that gather context before executing

Build in “why” questions: Why are you doing this? What’s your goal?

Create transparency about what the AI understands vs. what it’s inferring

Design for iteration and refinement, not just one-shot task completion

2. Balance Short-Term Needs with Long-Term Visioning

Llion Jones (CTO and co-founder of Sakana AI, co-author of the original Transformers paper) raised an important tension: Industry pressure for incremental gains can crowd out the exploration that leads to breakthroughs.

Jones shared a historical example: When RNNs were the dominant architecture, the field focused on optimization — making them faster, more efficient, more scalable. Then Transformers arrived and opened up entirely new capabilities that optimization alone couldn’t reach.

His observation: We’re seeing similar patterns with Transformers now. Most efforts focus on making them bigger, faster, cheaper — all valuable work. But what if the next architectural innovation requires exploring approaches that look inefficient by current metrics?

He framed this as the “explore vs. exploit” tension. Competitive pressure naturally drives toward exploitation — optimizing what’s proven to work. Breakthroughs come from exploration — investigating approaches that might not pan out but could unlock new capabilities. Both matter, but the balance often tilts heavily toward the former.

Rafael Rafailov (Stanford Ph.D. student, researcher at Thinking Machines) offered a concrete example: meta-learning. Current AI systems excel at specific tasks but can’t easily adapt to new ones without retraining. Humans naturally learn how to learn — we apply patterns from past learning to new domains. Meta-learning research explores whether AI can develop similar capabilities.

The challenge: Meta-learning appears less efficient than task-specific optimization in the short term. It requires more compute, more time, more experimentation. In competitive environments focused on shipping, it’s difficult to justify the investment.

The tension isn’t that optimization is bad — it’s essential for turning research into reliable products. The question is whether organizations create enough space for exploratory work alongside optimization efforts.

For design leaders:

Assess your team’s time allocation: What balance exists between optimization and exploration?

Consider protecting dedicated time for exploratory work without immediate ROI requirements

Value investigation of new approaches, even when current solutions are working well

Ask: What work wouldn’t happen without us? If multiple teams are solving the same problem, consider exploring different territory

3. Infrastructure and Global Implications

Kai-Fu Lee (who led AI at Apple, Microsoft, and Google China) offered a stark prediction: 2026 will be the most disruptive year yet as AI agents become the fundamental unit of work.

His reasoning: AI inference costs are dropping 10x per year. When agent-based workflows become economically viable at scale, work will fundamentally reorganize around AI agents as the primary doers, with humans as directors and decision-makers.
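To make the compounding concrete, here is a minimal sketch of what a steady 10x annual drop implies. It assumes the rate stays constant from year to year, which is a simplification and not something the talk guaranteed; the function name is illustrative.

```python
def relative_cost(years, annual_drop=10):
    """Cost relative to today after `years`, assuming a constant annual drop factor."""
    return 1 / annual_drop ** years

# Print the cost of the same inference workload as a fraction of today's cost.
for years in range(4):
    print(f"Year {years}: {relative_cost(years):.4f}x of today's cost")
```

Under that assumption, a workload that is too expensive to run at scale today costs one thousandth as much after three years, which is the economic shift Lee's prediction rests on.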

Janet Egan from the Center for a New American Security grounded this vision in geopolitical reality: Who controls AI data centers will significantly influence how AI develops globally.

She pointed out that computing power is the fundamental resource that unlocks AI potential — at development, training, deployment, and improvement stages. The geographic distribution of data centers, the energy infrastructure that powers them, and the regulatory frameworks that govern them all shape whose values get encoded into AI systems.

Rodrigo Liang (CEO of SambaNova) brought this down to practical constraints. He described the reality of chip design: 2–3 years to design an AI chip, 6–9 months to fabricate prototypes, another 9–12 months for testing and verification. A single bug can cost $100 million and a year of time.

The implication: Infrastructure decisions made today will constrain what’s possible 3–4 years from now. Design choices compound with infrastructure choices to shape long-term outcomes.
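As a rough check on that horizon, the stage durations Liang quoted can simply be added up. This is illustrative arithmetic only: it assumes the three stages run strictly back to back with no overlap, which the talk did not specify, and the names are hypothetical.

```python
# Stage durations in months as (low, high), taken from the figures quoted above.
# Assumption: stages run sequentially with no overlap.
STAGES_MONTHS = {
    "design": (24, 36),        # 2 to 3 years
    "fabrication": (6, 9),     # 6 to 9 months
    "verification": (9, 12),   # 9 to 12 months
}

def total_timeline_years(stages):
    """Return the (low, high) end-to-end duration in years."""
    low = sum(lo for lo, _ in stages.values())
    high = sum(hi for _, hi in stages.values())
    return low / 12, high / 12

low_years, high_years = total_timeline_years(STAGES_MONTHS)
print(f"{low_years:.2f} to {high_years:.2f} years")
```

Under that assumption the total comes to roughly 3.25 to 4.75 years, before counting any rework caused by a late bug.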

Why designers should care:

Infrastructure determines access — who can build AI-powered products

Energy and compute costs shape what interactions are economically viable

Data center locations influence latency and user experience globally

Regulatory frameworks in different regions constrain design choices

4. Leadership in the Age of AI Automation

May Habib (CEO of Writer) examined how leadership must evolve as AI capabilities scale. When AI can multiply a team's output many times over, what does effective leadership look like?

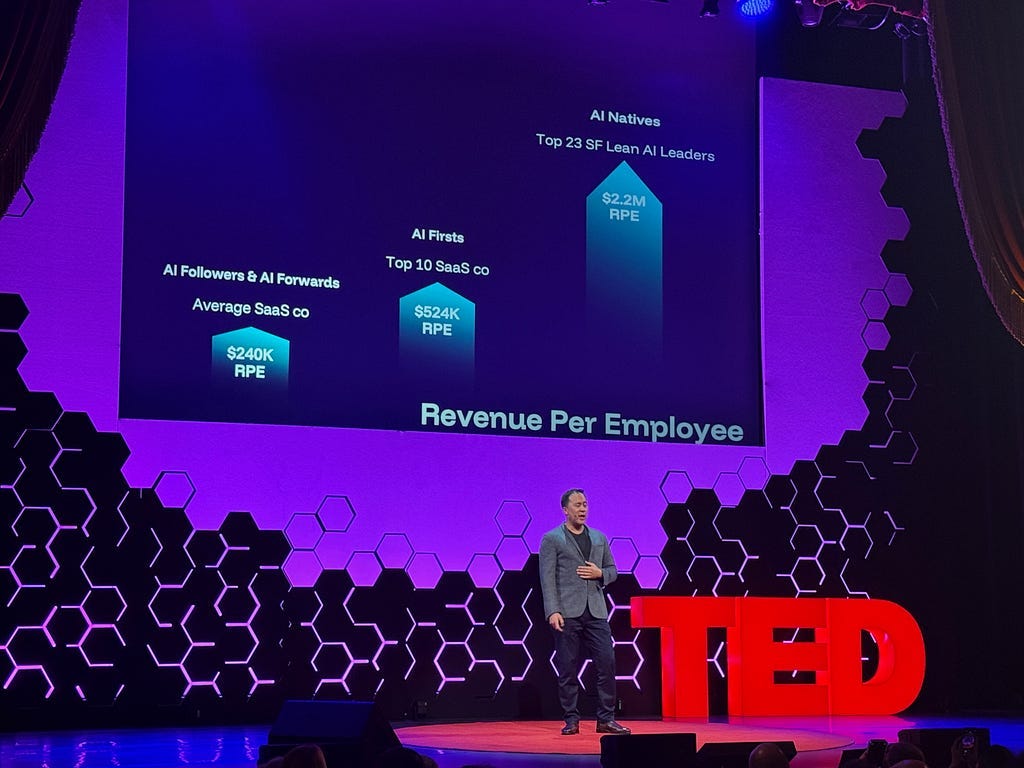

Jeremiah Owyang (General Partner at Blitzscaling Ventures, organizer of the Llama Lounge AI Startup Event Series) shared data from San Francisco startups showing how revenue per employee rises with the depth of AI adoption:

AI Followers/AI Forward: ~$240K revenue per employee

AI First companies: ~$500K revenue per employee (2x)

AI Native startups: ~$2.2M revenue per employee (~10x)

That 10x gap between AI Followers and AI Native startups isn’t primarily about having better AI tools — many companies have access to similar technology. It’s about organizational architecture built for AI from day one versus retrofitting AI into existing complexity. When execution capacity expands dramatically, leadership emphasis shifts from managing complexity to architecting simplicity.
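The multiples can be recomputed from the approximate figures in the list. This is a small sketch using only those three rounded numbers; the variable and function names are illustrative.

```python
# Approximate revenue per employee in USD, from the figures quoted above.
REVENUE_PER_EMPLOYEE = {
    "AI Followers/AI Forward": 240_000,
    "AI First": 500_000,
    "AI Native": 2_200_000,
}

def multiples_of_baseline(revenue, baseline_key):
    """Express each tier's revenue per employee as a multiple of the baseline tier."""
    baseline = revenue[baseline_key]
    return {tier: round(value / baseline, 1) for tier, value in revenue.items()}

multiples = multiples_of_baseline(REVENUE_PER_EMPLOYEE, "AI Followers/AI Forward")
for tier, multiple in multiples.items():
    print(f"{tier}: {multiple}x")
```

On these rounded inputs the ratios come out to about 2.1x and 9.2x, so the "10x" figure is best read as an order-of-magnitude statement.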

Habib identified three leadership shifts for this new environment:

Become an architect of radical simplicity — Habib argued that organizational complexity — approval cycles, coordination overhead, systems requiring seventeen browser tabs — made sense when human execution was expensive. But when AI can compress what took 7 months into 7 days, that complexity becomes the constraint. The leadership challenge is distinguishing necessary structure from accumulated bureaucratic friction, then systematically removing the latter.

Become a champion of human potential — When AI handles execution, humans focus on judgment, strategy, and creativity. Habib acknowledged the difficulty: people resist when their self-worth is tied to task completion. The leadership response is helping teams reframe their value from executing work to orchestrating systems, and creating roles that are more strategic and creative than what’s being replaced.

Become a hunter of greenfield opportunity — Habib distinguished between optimization — making processes more efficient — and transformation — building products or services that weren’t previously feasible. When execution constraints are removed, the question shifts from “how do we do this better?” to “what becomes possible now?”

The Workforce Challenge: Developing AI Management Skills at Scale

Habib’s observation about expanded execution capacity raises a practical challenge: If AI agents can multiply individual output, more people take on management-like responsibilities — directing work, evaluating quality, providing feedback.

Aparna Chennapragada (Microsoft’s Chief Product Officer) articulated this during Day 2 panels: When your role shifts from “doing the work” to “directing agents that do the work,” you need management skills many people haven’t developed yet.

Consider the core capabilities of effective management:

Setting clear goals and expectations

Providing context and constraints

Giving constructive feedback and iterating

Course-correcting when things go off track

Building trust through consistency

These skills typically develop through experience managing people. Now organizations need to help employees develop them for managing AI systems — often while the technology capabilities themselves evolve monthly.

Anthony Abbatiello (Partner and Future of Work Leader at PwC) noted that 77% of companies are stuck in “pilot purgatory”: they can’t scale AI from experimental projects to production deployment. The technical barriers are real, but the people and organizational barriers are often more significant.

As Robin Braun from Hewlett Packard Enterprise put it: “Culture eats AI strategy for breakfast.”

What this means for design leaders: Teams need to develop what might be called “AI management fundamentals”:

Alignment skills — Translating ambiguous business goals into specific, verifiable AI instructions

Coaching capability — Iterating on AI outputs through feedback and refinement

Change management — Adapting workflows as AI capabilities evolve

Judgment frameworks — Knowing when to trust AI output versus when deeper verification is required

This isn’t about becoming prompt engineering experts. It’s about applying interpersonal and strategic management skills to working with intelligent systems — skills many organizations already value but now need to develop more broadly across teams.

Organizations that invest in developing these capabilities early will likely have advantages over those treating AI adoption purely as a technical deployment.

5. Democratizing Storytelling and Creative Expression

Prem Akkaraju (CEO of Stability AI, previously Oscar-winning VFX leader) focused on how AI is fundamentally reinventing storytelling — which he called “the greatest human invention in history.”

His vision centers on democratization: AI can enable everyone to tell their own stories, not just those with access to studio resources or technical expertise. Breakthrough creative work may come from individuals anywhere, as AI provides capabilities that were previously available only to well-funded organizations.

The shift he described: AI is closing the gap between imagination and creation. The tools that once required teams of specialists — visual effects, animation, music composition, video production — are becoming accessible to individual storytellers. This isn’t about replacing human creativity; it’s about removing the technical and resource barriers that prevented people from expressing their creative vision.

The next evolution: Moving from 2D screen-based interfaces to 3D spatial intelligence. AI systems that understand and operate in three-dimensional space rather than just processing text and images.

For product designers, this suggests focusing on how we help people communicate ideas effectively and bring their vision to life. The challenge isn’t just building better interfaces — it’s designing tools that help users tell their story clearly, whether they’re explaining a business strategy, presenting data insights, or communicating a creative concept. AI can handle technical complexity, but the design challenge is ensuring people can express what they mean and be understood.

Effective communication remains the final frontier.

What This Means Monday Morning

The conference theme was boldness, but the practical message was more specific: Being willing to question established approaches and rebuild from first principles when appropriate.

Three actions to take:

1. Audit your AI interaction design

Does your AI just execute tasks or does it gather context first?

Do you design for iteration and refinement or one-shot completion?

Are you preserving human agency in meaningful creative work?

2. Create protected exploration time

How much of your team’s capacity goes to optimization vs. exploration?

What research wouldn’t happen if you didn’t do it?

Where are you at risk of missing architectural shifts by over-optimizing current approaches?

3. Invest in AI management capability

Who on your team has actual management experience?

How are you teaching alignment, coaching, and judgment skills?

Are you measuring effective AI use or just deployment?

Three questions to keep asking:

Are we building AI that executes tasks or AI that understands user goals?

Which organizational processes add genuine value versus accumulated friction?

What work becomes possible if execution constraints are removed?

The companies that succeed won’t just deploy AI faster. They’ll develop workforces that can manage AI effectively — at every level of the organization.

Scott Hines is Head of Design for Onshore Trading & Payments at OKX, focusing on AI-powered experience design for regulated crypto markets. He previously led design teams at Google, Meta, Amazon, SoFi, and PayPal.